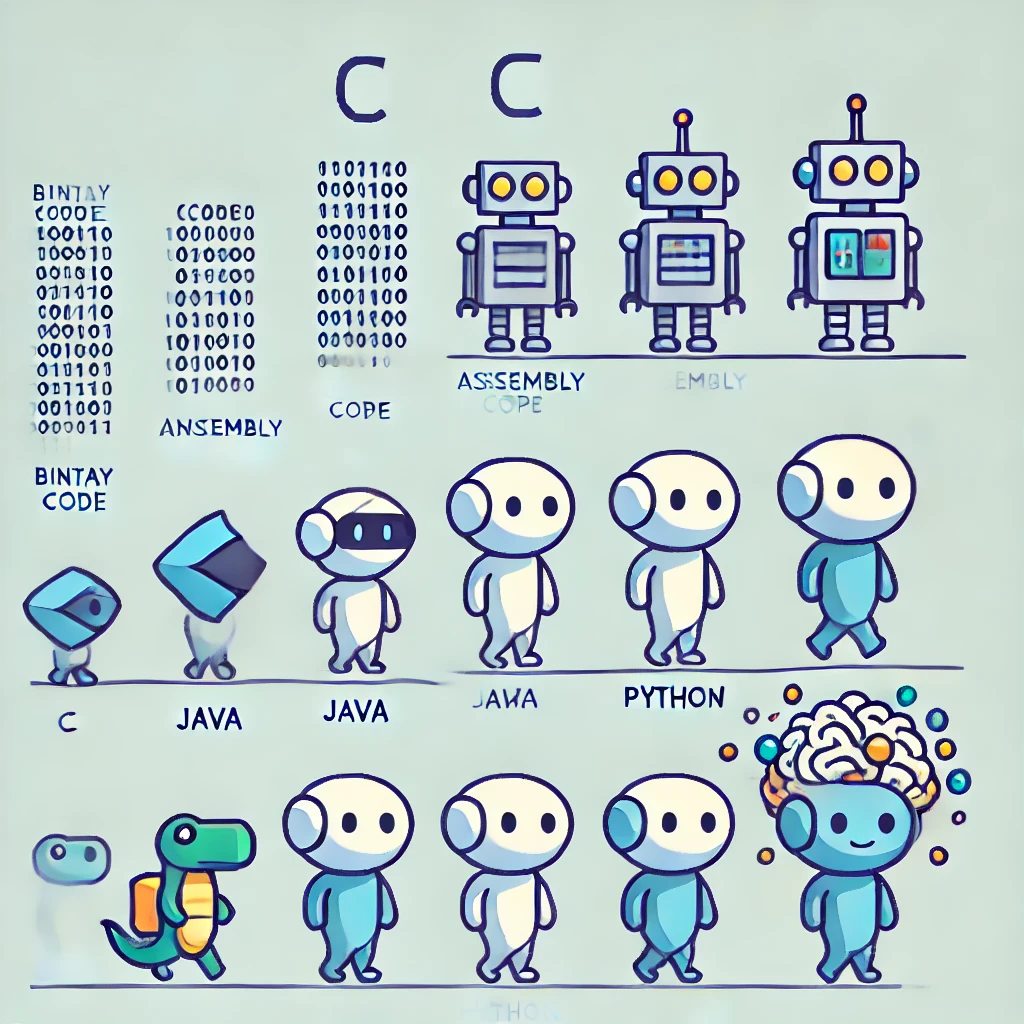

From 1+1 in Assembly to LLMs: The Evolution of Computing Abstraction

Tracing the Layers from Machine Code to Natural Language Interfaces

Computing has come a long way since the early days of punch cards and assembly language. With each new generation of programming paradigms, we’ve added layers of abstraction that make it easier for humans to interact with machines. In this post, we’ll explore how a simple operation like 1 + 1 is handled across different programming languages and models, from assembly to modern Large Language Models (LLMs). We’ll see how each layer adds complexity under the hood but offers significant benefits to developers and users alike.

1. Assembly Language: The Birthplace of Computing

On early computers, programmers wrote code in assembly language, a low-level language closely tied to the machine’s hardware. Each assembly instruction maps almost one-to-one onto a machine-code instruction, which the CPU executes.

How 1 + 1 Works in Assembly

Here’s how you might perform 1 + 1 in x86 assembly:

    section .data
        result db 0          ; Reserve a byte for the result

    section .text
        global _start

    _start:
        mov al, 1            ; Load 1 into the AL register
        add al, 1            ; Add 1 to the value in AL
        mov [result], al     ; Store the result in memory

        ; Exit program (system-specific code omitted for brevity)

- Registers: Small storage locations within the CPU hold the data.

- Instructions: Operations like `mov` and `add` correspond directly to machine code.

- Memory Management: Programmers manually manage memory and CPU registers.
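To make the "corresponds directly to machine code" point concrete, here is a minimal Python sketch of a toy CPU that understands just two real x86 opcodes: `B0 ib` (`mov al, imm8`) and `04 ib` (`add al, imm8`). The `run` function and its single-register model are illustrative assumptions, not how a real processor or emulator is built, and the final store to `result` is left out.

```python
# Toy model: execute the raw bytes an assembler emits for
#   mov al, 1   -> B0 01
#   add al, 1   -> 04 01
# Only these two opcodes are handled; anything else is rejected.

MOV_AL_IMM8 = 0xB0  # mov al, imm8
ADD_AL_IMM8 = 0x04  # add al, imm8


def run(machine_code: bytes) -> int:
    """Execute (opcode, operand) byte pairs and return the final AL value."""
    if len(machine_code) % 2 != 0:
        raise ValueError("expected (opcode, operand) byte pairs")
    al = 0  # the 8-bit AL register
    for pc in range(0, len(machine_code), 2):
        opcode, operand = machine_code[pc], machine_code[pc + 1]
        if opcode == MOV_AL_IMM8:
            al = operand
        elif opcode == ADD_AL_IMM8:
            al = (al + operand) & 0xFF  # AL wraps around at 8 bits
        else:
            raise ValueError(f"unsupported opcode: {opcode:#04x}")
    return al


program = bytes([0xB0, 0x01, 0x04, 0x01])
print(run(program))  # prints 2
```

The four bytes in `program` are all the CPU sees for the first two instructions; the mnemonics exist only for the programmer.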

Benefits:

- Efficiency: Code runs very fast because it’s closely tied to hardware.

- Control: Offers granular control over hardware resources.

Drawbacks:

- Complexity: Difficult to read, write, and maintain.

- Error-Prone: High chance of bugs due to manual management.

2. C Language: Introducing Compilation

To simplify programming, higher-level languages like C were developed. C provides a layer of abstraction over assembly, allowing developers to write more readable code that gets compiled into machine code.

How 1 + 1 Works in C

    #include <stdio.h>

    int main(void) {
        int result = 1 + 1;
        printf("1 + 1 = %d\n", result);
        return 0;
    }

- Compilation: The C code is compiled into machine code before execution.

- Variables and Types: Introduces variables (`int result`) and data types.

- Standard Libraries: Provides functions like `printf` for input/output operations.

Benefits:

- Readability: Easier to understand and maintain than assembly.

- Portability: Can be compiled on different hardware architectures.

- Efficiency: Compiled code runs nearly as fast as assembly.

Drawbacks:

- Manual Memory Management: Developers still need to manage memory (e.g., malloc, free).

- Complex Syntax: While simpler than assembly, C can still be complex for beginners.

3. Java: Embracing Object-Oriented Programming

Java is one of the most widely used object-oriented programming (OOP) languages, adding another layer of abstraction. It further simplifies coding by organizing data and behavior into objects and classes.

How 1 + 1 Works in Java

    public class Addition {
        public static void main(String[] args) {
            int a = 1;
            int b = 1;
            int c = a + b;
            System.out.println("1 + 1 = " + c);
        }
    }

- Classes and Objects: Code is organized into classes (`public class Addition`).

- Compilation to Bytecode: Java code is compiled into bytecode for the Java Virtual Machine (JVM).

- JVM Execution: The JVM interprets or JIT-compiles bytecode into machine code at runtime.
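As a rough illustration of what "interpreting bytecode" means, the Python sketch below runs the instruction sequence that `javap -c` typically shows for the three assignments in `main` on a tiny stack machine. The `execute` function is hypothetical and handles only the few instructions needed here; a real JVM also verifies, links, and JIT-compiles code, and wraps `int` arithmetic at 32 bits.

```python
# Mnemonics as they typically appear in `javap -c` output for:
#   int a = 1; int b = 1; int c = a + b;
BYTECODE = [
    "iconst_1", "istore_1",   # a = 1
    "iconst_1", "istore_2",   # b = 1
    "iload_1", "iload_2",     # push a, then b
    "iadd",                   # pop two ints, push their sum
    "istore_3",               # c = a + b
]


def execute(bytecode):
    """Interpret the toy bytecode and return the local-variable slots."""
    stack = []                # operand stack
    local_vars = [None] * 4   # slot 0 holds `args` in a static main
    for instruction in bytecode:
        name, _, arg = instruction.partition("_")
        if name == "iconst":
            stack.append(int(arg))
        elif name == "istore":
            local_vars[int(arg)] = stack.pop()
        elif name == "iload":
            stack.append(local_vars[int(arg)])
        elif name == "iadd":
            right, left = stack.pop(), stack.pop()
            stack.append(left + right)
        else:
            raise ValueError(f"unsupported instruction: {instruction}")
    return local_vars


local_vars = execute(BYTECODE)
print(local_vars[3])  # prints 2
```

The same list of instructions could be handed to any machine that implements `execute`, which is the idea behind the platform independence listed below.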

Benefits:

- Platform Independence: Bytecode runs on any system with a JVM.

- Memory Management: Automatic garbage collection reduces memory leaks.

- OOP Features: Inheritance, encapsulation, and polymorphism improve code reuse and organization.

Drawbacks:

- Performance Overhead: JVM adds overhead compared to native machine code.

- Increased Complexity: Additional layers can make debugging more complex.

4. Python: The Rise of Interpreted Languages

As of 2024, Python has become one of the most popular programming languages, especially for scripting and rapid application development.

How 1 + 1 Works in Python

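    a = 1
    b = 1
    c = a + b
    print(f"1 + 1 = {c}")

- Interpreted Execution: In the reference implementation (CPython), source code is first compiled to bytecode, which the interpreter then executes at runtime. There is no separate ahead-of-time compilation to machine code.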

- Dynamic Typing: Variables don’t require explicit type declarations.

- High-Level Abstractions: Simplifies many complex programming tasks.
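What the interpreter actually runs can be inspected with the standard `dis` module. The sketch below compiles `c = a + b` to a code object, lists its bytecode instruction names, and then executes it twice. Exact instruction names vary between CPython versions (for example `BINARY_ADD` in older releases and `BINARY_OP` from 3.11), so treat the listing as illustrative.

```python
import dis

source = "c = a + b"
code_object = compile(source, "<example>", "exec")

# Instruction names differ across CPython versions, so we only list them.
opnames = [instruction.opname for instruction in dis.get_instructions(code_object)]
print(opnames)

# Dynamic typing: the same bytecode works for ints...
int_namespace = {"a": 1, "b": 1}
exec(code_object, int_namespace)
print(int_namespace["c"])  # prints 2

# ...and for strings, where + means concatenation.
str_namespace = {"a": "1", "b": "1"}
exec(code_object, str_namespace)
print(str_namespace["c"])  # prints 11
```

The addition instruction carries no type information; the interpreter decides what `+` means at runtime, which is part of the performance cost listed under the drawbacks.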

Benefits:

- Ease of Use: Simple syntax makes it accessible for beginners.

- Rapid Development: Quick to write and test code.

- Extensive Libraries: Rich ecosystem for various applications (web development, data science, etc.).

Drawbacks:

- Performance: Slower execution compared to compiled languages.

- Less Control: Abstracts away hardware details, limiting low-level optimization.

5. Large Language Models (LLMs): Conversational Computing

Now, we have reached an era where you can ask an LLM like ChatGPT to compute 1 + 1.

How 1 + 1 Works in an LLM

- Natural Language Input: You type `"What is 1 + 1?"` in plain English.

- Tokenization: The input is broken down into tokens (e.g., `["What", "is", "1", "+", "1", "?"]`).

- Embeddings: Each token is converted into a high-dimensional vector (e.g., 512 dimensions).

- Self-Attention and Transformers: The model processes these vectors through multiple layers to understand context and relationships between tokens.

- Generating Logits: For each possible token in the vocabulary, the model generates a score called a logit. Mathematically, for the final hidden state $h$, the logits are computed as:

  $$z = W h$$

  where:

  - $W$ is the weight matrix, including the bias, mapping $h$ to the vocabulary size. The shape of this matrix is $V \times d$, with $d$ the dimension of $h$.

  - $V$ is the size of the vocabulary.

- Softmax Function: The logits are converted into probabilities using the softmax function:

  $$P(y_i) = \frac{e^{z_i}}{\sum_{j=1}^{V} e^{z_j}}$$

  where:

  - $P(y_i)$ is the probability of token $i$.

  - $z_i$ is the logit corresponding to token $i$.

- Sampling from the Categorical Distribution: The model samples the next token based on the probabilities. In this case, the token `"2"` has a much higher probability than other tokens, so it’s most likely to be selected.

- Next Token Prediction: The model outputs `"2"`, completing the response.

Benefits:

- User-Friendly: Interact with machines using natural language.

- Versatility: Can perform a wide range of tasks beyond arithmetic.

- Accessibility: Lowers the barrier to entry for non-programmers.

Drawbacks:

- Computational Complexity: Even for simple tasks like `1 + 1`, LLMs involve extensive computations, including numerous matrix multiplications. This requires significant computational resources, often involving specialized hardware like GPUs.

- Lack of Symbolic Reasoning: LLMs predict based on learned patterns in data, not actual calculations. They don’t have an Arithmetic Logic Unit (ALU) or modules specifically designed for computation.

- Potential for Errors: May provide incorrect answers if the pattern isn’t well-represented in the training data.
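The steps in this section, from tokens to logits to softmax to sampling, can be sketched in a few lines of plain Python. Everything here is a toy: the five-token vocabulary, the 3-dimensional `hidden_state` (standing in for the output of the transformer layers), and the hand-picked `WEIGHTS` are assumptions chosen so that "2" wins, and the regex tokenizer is a stand-in for the subword tokenizers real models use.

```python
import math
import random
import re


def tokenize(text):
    """Toy tokenizer: runs of word characters, or single punctuation marks."""
    return re.findall(r"\w+|[^\w\s]", text)


VOCAB = ["1", "2", "3", "+", "?"]

# Hand-picked output weights, one row per vocabulary entry (shape V x d).
WEIGHTS = [
    [1.0, 0.0, 0.0],   # "1"
    [4.0, 2.0, 0.0],   # "2"
    [1.0, 1.0, 1.0],   # "3"
    [0.0, 0.0, 1.0],   # "+"
    [-1.0, 0.0, 0.0],  # "?"
]


def compute_logits(weights, hidden_state):
    """One score per vocabulary entry: a matrix-vector product."""
    return [sum(w * h for w, h in zip(row, hidden_state)) for row in weights]


def softmax(logits):
    """Turn logits into probabilities (max subtracted for numerical stability)."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


tokens = tokenize("What is 1 + 1?")
print(tokens)

hidden_state = [1.0, 0.5, -0.5]  # assumed output of the transformer layers
logits = compute_logits(WEIGHTS, hidden_state)
probabilities = softmax(logits)

# Sample from the categorical distribution, and also take the most likely token.
rng = random.Random(0)
next_token = rng.choices(VOCAB, weights=probabilities, k=1)[0]
greedy_token = VOCAB[probabilities.index(max(probabilities))]
print(greedy_token, probabilities)
```

With these made-up numbers, "2" receives roughly 96% of the probability mass, so sampling will usually, but not always, pick it. That small remaining chance of another token is one way to picture the "Potential for Errors" drawback.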

The Future of AI Beyond LLMs

While LLMs are powerful, they have limitations due to their inability to perform explicit computations or symbolic reasoning. Researchers are exploring ways to address these weaknesses:

- Integrating External Tools: Some LLMs can generate and execute code (e.g., Python scripts) to perform calculations, effectively outsourcing computation tasks to specialized modules rather than relying solely on text prediction.

  - Example: An LLM might produce code like `print(1 + 1)` and execute it to get the result `2`.

- Hybrid Models: Combining LLMs with other AI systems that specialize in symbolic reasoning or mathematical computation to enhance overall capabilities.

- Advancements in Model Architectures: Developing new architectures that incorporate computation modules or improve reasoning abilities within the model itself.
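A minimal sketch of the tool-use idea, assuming the model has already returned the string `print(1 + 1)`: the host program executes that code and captures what it prints. `run_generated_code` is a hypothetical helper, and restricting builtins like this is not a real sandbox; production systems isolate generated code far more carefully.

```python
import contextlib
import io


def run_generated_code(source: str) -> str:
    """Execute model-generated Python and return whatever it printed."""
    captured = io.StringIO()
    # Expose only `print`; this limits accidents but is NOT a security boundary.
    restricted_globals = {"__builtins__": {"print": print}}
    with contextlib.redirect_stdout(captured):
        exec(source, restricted_globals)
    return captured.getvalue().strip()


generated_code = "print(1 + 1)"  # stand-in for an LLM's output
tool_result = run_generated_code(generated_code)
print(tool_result)  # prints 2
```

Here the addition is carried out by the Python interpreter, and ultimately by the CPU's arithmetic hardware, instead of being predicted token by token.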

Even a request as simple as 1 + 1 involves a great deal of computation in an LLM because of the extensive matrix operations in the transformer architecture. As a result, running LLMs typically requires specialized hardware with significant processing power.

The Common Denominator: Transistors and Logic Gates

Despite the increasing layers of abstraction, all these computations ultimately run on silicon-based transistors (although we might move away from silicon in the future) using logic gates to process binary data (1s and 0s).

- Machine Code: The lowest level, consisting of binary instructions executed by the CPU / GPU.

- Abstraction Layers: Each new programming paradigm adds a layer, making it easier for humans but adding complexity under the hood.

- Evolution Purpose: The primary goal is to make programming more accessible, efficient, and aligned with human thinking.
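To see how logic gates alone can compute 1 + 1, here is a small Python sketch of a ripple-carry adder built from AND, OR, and XOR. The function names and the 8-bit `width` are illustrative choices; real hardware wires these gates together in silicon, and often uses faster adder designs.

```python
def AND(a, b):
    return a & b


def OR(a, b):
    return a | b


def XOR(a, b):
    return a ^ b


def half_adder(a, b):
    """Add two bits; return (sum_bit, carry_bit)."""
    return XOR(a, b), AND(a, b)


def full_adder(a, b, carry_in):
    """Add two bits plus an incoming carry; return (sum_bit, carry_out)."""
    partial_sum, carry_a = half_adder(a, b)
    sum_bit, carry_b = half_adder(partial_sum, carry_in)
    return sum_bit, OR(carry_a, carry_b)


def ripple_add(x, y, width=8):
    """Add two unsigned integers bit by bit, as a chain of full adders would."""
    carry = 0
    result = 0
    for i in range(width):
        bit, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= bit << i
    return result  # the final carry is dropped, so the sum wraps at `width` bits


print(half_adder(1, 1))   # prints (0, 1): sum bit 0, carry 1, i.e. binary 10
print(ripple_add(1, 1))   # prints 2
```

Every layer discussed in this post, from the `add` instruction to the matrix multiplications inside an LLM, eventually reduces to circuits of this kind.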

Why Add More Abstraction Layers?

- Human-Centric Design: Abstractions make it easier for developers to write, read, and maintain code.

- Productivity: Higher-level languages reduce development time and errors.

- Innovation: Simplifying programming enables more people to create complex applications, driving technological advancement.

Conclusion: Bridging the Gap Between Humans and Machines

From manually coding in assembly to interacting with machines using natural language, we’ve significantly bridged the gap between humans and computers. While each layer of abstraction adds complexity beneath the surface, the benefits in usability, productivity, and accessibility are undeniable.

What a time to be alive!

- No More Punch Cards: We’ve moved far beyond the days of inputting binary code manually.

- Natural Language Interfaces: LLMs allow us to communicate with machines as we would with another person.

- Focus on Innovation: Developers can focus on solving complex problems without worrying about low-level implementation details.

As technology continues to evolve, we can expect even more intuitive ways to interact with machines, making computing accessible to an even broader audience.