Notes, essays, and research fragments.

I've been spending a lot of time thinking about memory systems for AI agents. A big part of that work is not just the model, but the storage layer underneath it. I want something t...

Learn more

Motivation “Knowledge graph” gets used everywhere. This post pins down a minimal formal meaning, relates it to a widely cited definition from Hogan et al. (2020), and compares RDF...

Learn more

Motivation: Why On-Policy RL Matters for Modern AI If you've been following the latest developments in large language models (LLMs), you've probably heard of GRPO (Group Relative P...

Learn more

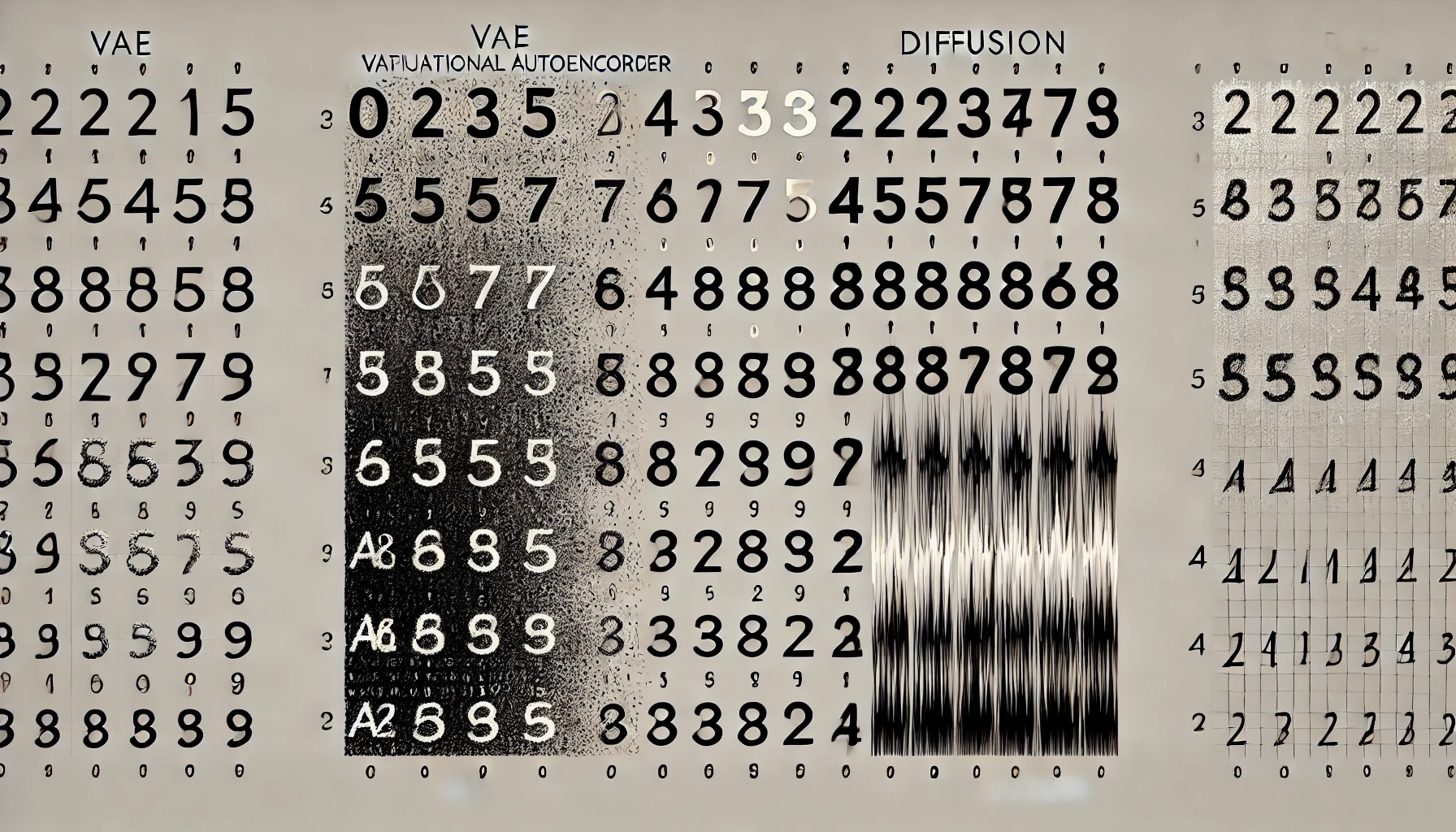

Motivation: Why Do We Need Generative Models? In order to generate data, we need to sample from a distribution. But here, we are not talking about simply sampling from the training...

Learn more

Modern Natural Language Processing (NLP) revolves around language modeling, the art of predicting the next token given the previous ones. Formally, if we have a sequence of tokens...

Learn more

Large Language Models (LLMs) have brought a new twist to the way we think about training algorithms. While traditional Supervised Learning (SL) and Reinforcement Learning (RL) migh...

Learn more

Most of us, whether consciously or not, use Maximum Likelihood Estimation (MLE) in our daily machine learning workflows. When you’re training a model to predict labels in a supervi...

Learn more

Sequential decision making is a fundamental challenge in machine learning and AI. From planning your next vacation itinerary to training a robot to navigate a warehouse, we often f...

Learn more

Computing has come a long way since the early days of punch cards and assembly language. With each new generation of programming paradigms, we've added layers of abstraction that m...

Learn more

Statistical significance testing, specifically the use of p-values, has been the cornerstone of hypothesis testing for decades. However, this frequentist approach has critical flaw...

Learn more

In recent years, Transformers have dominated the field of natural language processing (NLP), while Graph Neural Networks (GNNs) have proven essential for tasks involving graph stru...

Learn more

Supervised Learning Objective: Maximum Likelihood In supervised learning, the goal is to learn a function that maps an input to a label. This is typically done by maximizing the...

Learn more

Llama 3.1 was released by Meta a month ago, and you can easily access it. I used their quite extensively a few years ago, and haven't used it for some years. It was already an ama...

Learn more

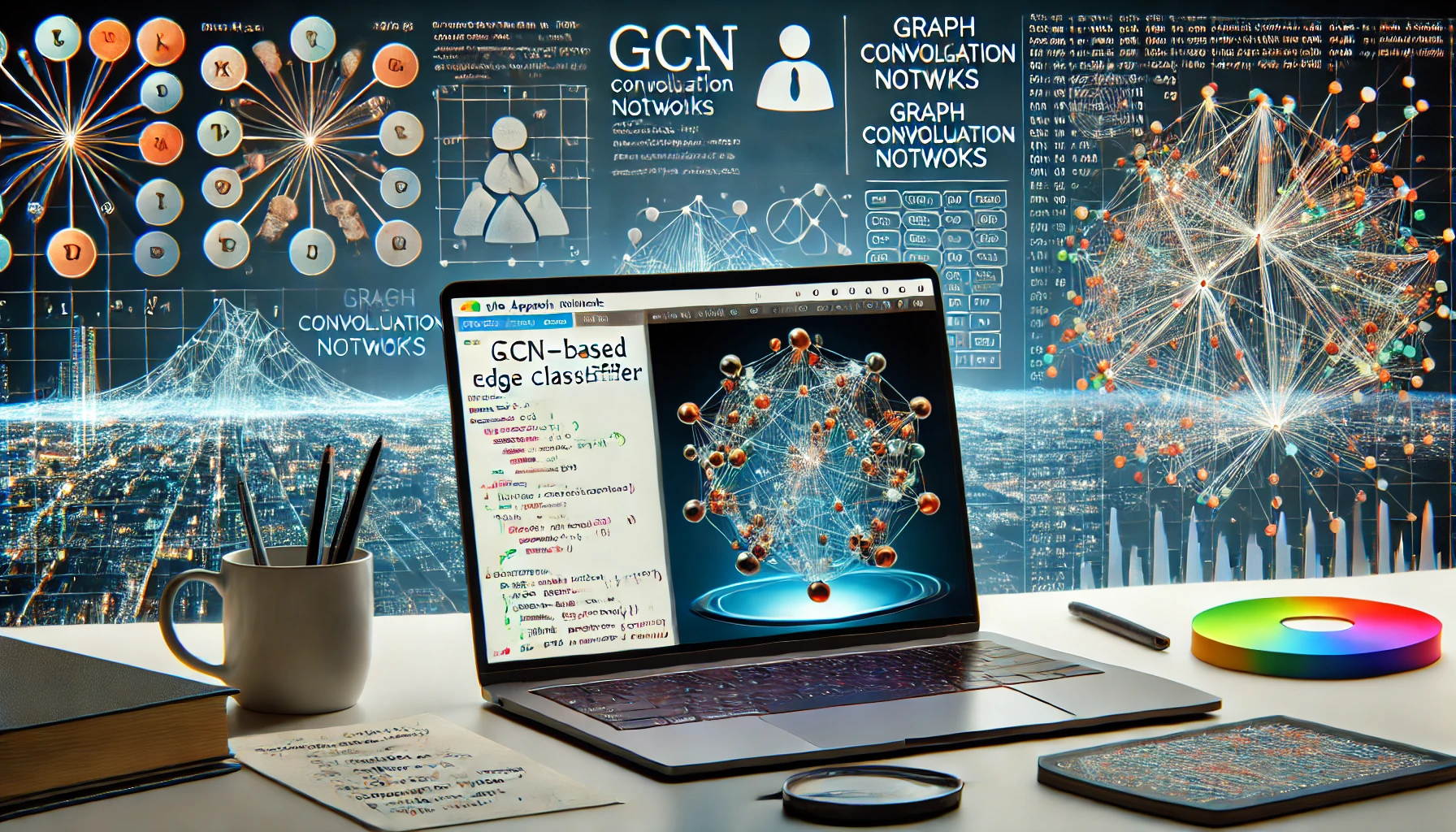

In this post, I'll walk you through the process of training a Graph Convolutional Network (GCN) for edge classification, a common task in graph-based machine learning applications....

Learn more

The project is the core of my PhD work. It was heavily inspired by cognitive science theories, such as the ones from . It's about developing agents equipped with human-like ext...

Learn more

Generating images with text I've been using it massively for everything lately. It's also integrated into VS Code, which really helps me to write code faster. Below is an example o...

Learn more

I thought that I could make a better website than the one I had. So I made this one! I'll be posting my projects and other stuff here. I hope you like it! :) I was surprised that I c...

Learn more

This paper was a result of the Hugging Face BigScience research workshop that I participated in in 2021. The paper can be found at . Abstract: Large language models (LLMs) have been...

Learn more

This paper was a result of the Hugging Face BigScience research workshop that I participated in in 2021. The paper can be found at . Abstract: PromptSource is a system for creating,...

Learn more

This paper was a result of the Hugging Face BigScience research workshop that I participated in in 2021. The paper can be found at . Abstract: Large language models have recently bee...

Learn more

This is a paper that I wrote together with . We were able to achieve SOTA back then by simply training RoBERTa (a variant of BERT) with speaker tokens! Check out Abstract: We pr...

Learn more

I trained a simple age and gender classification model using ArcFace embeddings and MLPs. Check out the paper and the code. Multilayer Perceptrons (MLPs) are foundational neural network...

Learn more
